if you work with 640x480 images, every observation has 640*480=307200 variables, which results in a 703Gb covariance matrix! That's definitely not what you would like to keep in memory of your computer, or even in memory of your cluster. On another hand, sometimes, when you have too many variables, the covariance matrix itself will not fit into memory. This matrix will have all the same properties as normal, just a little bit less accurate. So you need only to build the covariance matrix without loading entire dataset into memory - pretty tractable task.Īlternatively, you can select a small representative sample from your dataset and approximate the covariance matrix. With m=1000 variables of type float64, a covariance matrix has size 1000*1000*8 ~ 8Mb, which easily fits into memory and may be used with SVD. Indeed, typical PCA consists of constructing a covariance matrix of size m x m and applying singular value decomposition to it. When you have too many observations, but the number of variables is from small to moderate, you can build the covariance matrix incrementally. Typically problems with memory come from only one of these two numbers.

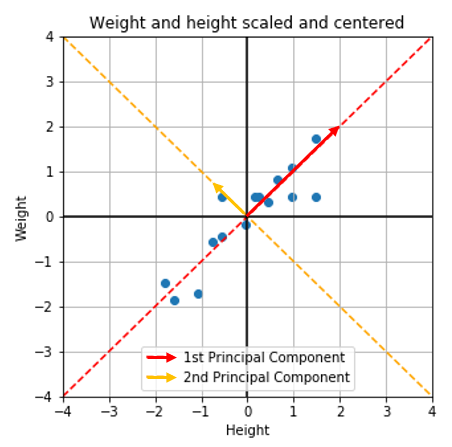

(I personally like to interpret word "online" as a reference to some infinitely long source of data from Internet like a Twitter feed, where you just can't load the whole dataset at once).īut what if you really wanted to apply dimensionality reduction technique like PCA to a dataset that doesn't fit into a memory? Normally a dataset is represented as a data matrix X of size n x m, where n is number of observations (rows) and m is a number of variables (columns). Roughly speaking, instead of working with the whole dataset, these methods take a little part of them (often referred to as "mini-batches") at a time and build a model incrementally. In your concrete case it's more promising to take a look at online learning methods. With 8 variables (columns) your space is already low-dimensional, reducing number of variables further is unlikely to solve technical issues with memory size, but may affect dataset quality a lot. First of all, dimensionality reduction is used when you have many covariated dimensions and want to reduce problem size by rotating data points into new orthogonal basis and taking only axes with largest variance.
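For the many-observations case, building the covariance matrix incrementally could look roughly like the sketch below. It is only an illustration under some assumptions: the data arrives as an iterable of 2-D NumPy arrays (mini-batches with m columns each), and the helper names `incremental_covariance` and `pca_from_covariance` are made up for this example rather than taken from any library.

```python
import numpy as np


def incremental_covariance(batches):
    """Accumulate the m x m sample covariance from an iterable of (k x m) arrays.

    Only the running sums (size m and m x m) are kept in memory,
    never the full n x m data matrix.
    """
    n = 0
    total = None   # running sum of rows, shape (m,)
    outer = None   # running sum of X^T X, shape (m, m)
    for batch in batches:
        batch = np.asarray(batch, dtype=np.float64)
        if total is None:
            m = batch.shape[1]
            total = np.zeros(m)
            outer = np.zeros((m, m))
        n += batch.shape[0]
        total += batch.sum(axis=0)
        outer += batch.T @ batch
    mean = total / n
    # Unbiased estimate: (sum of x x^T - n * mean mean^T) / (n - 1)
    cov = (outer - n * np.outer(mean, mean)) / (n - 1)
    return mean, cov


def pca_from_covariance(cov, n_components):
    """Return the leading principal axes (as columns) and their variances."""
    # For a symmetric positive semi-definite matrix, the singular values
    # are the eigenvalues, returned in descending order.
    u, s, _ = np.linalg.svd(cov)
    return u[:, :n_components], s[:n_components]


# Toy usage: pretend X is too big and can only be read 1000 rows at a time.
rng = np.random.default_rng(0)
X = rng.normal(size=(10_000, 8))
batches = (X[i:i + 1_000] for i in range(0, len(X), 1_000))

mean, cov = incremental_covariance(batches)
components, variances = pca_from_covariance(cov, n_components=2)
projected = (X[:5] - mean) @ components  # project a few rows onto 2 axes
```

One caveat: accumulating raw sums of products like this can lose precision when the column means are large compared to the spread of the data. In that case it may be safer to subtract a rough estimate of the mean from each batch first, or to use a ready-made implementation such as scikit-learn's `IncrementalPCA`.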